Bayesian Tuning of the Pre-Trained PEGASUS Model for Informative Indonesian Text Summarization

DOI:

https://doi.org/10.24002/jbi.v17i1.12915

Keywords:

Abstractive Text Summarization, PEGASUS, Bayesian Optimization, Formatted Input, Informative Input

Abstract

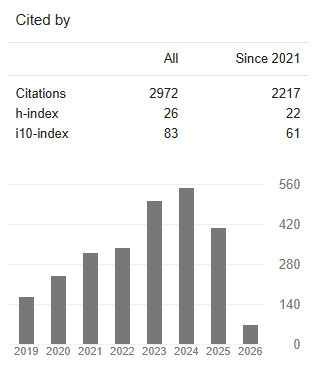

This research explores abstractive summarization of Indonesian news by fine-tuning the PEGASUS model with Bayesian optimization and contextually enriched inputs. The dataset contains 286,277 document-summary pairs scraped from JPNN.com, together with the titles and keyphrases used to construct the informative input. Each section of the input is marked with a special token: <TITLE>, <KEYPHRASES>, or <ARTICLE>. Evaluation with ROUGE and BERTScore shows that informative input substantially improves performance over regular input: +16.75% (ROUGE-1), +27.25% (ROUGE-2), +18.58% (ROUGE-L and ROUGE-Lsum), and +2.7% (BERTScore-F1). Saliency analysis shows consistently high sentence weights for the contextual input components. Bayesian hyperparameter tuning via Optuna yields only marginal additional gains (+1.21% ROUGE-1, +2.1% ROUGE-2, +1.38% ROUGE-L and ROUGE-Lsum, +0.23% BERTScore), which is attributed to the limited number of trials (12) and the narrow hyperparameter search space. These findings confirm the effectiveness of contextual input design and indicate the potential of hyperparameter tuning for Transformer-based summarization in low-resource languages.
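The informative input described in the abstract can be illustrated with a minimal sketch. Only the marker strings <TITLE>, <KEYPHRASES>, and <ARTICLE> come from the abstract; the function names, the ordering of the sections, the keyphrase separator, and the whitespace layout are assumptions made for illustration and may differ from what the authors used.

```python
from typing import Sequence

# Marker strings are taken from the abstract. Everything else in this
# sketch (order, separators, spacing) is an assumption.
TITLE_TOKEN = "<TITLE>"
KEYPHRASES_TOKEN = "<KEYPHRASES>"
ARTICLE_TOKEN = "<ARTICLE>"


def build_informative_input(
    title: str,
    keyphrases: Sequence[str],
    article: str,
    keyphrase_sep: str = ", ",  # hypothetical separator
) -> str:
    """Join title, keyphrases, and article body, each preceded by its marker."""
    joined_keyphrases = keyphrase_sep.join(k.strip() for k in keyphrases)
    parts = [
        f"{TITLE_TOKEN} {title.strip()}",
        f"{KEYPHRASES_TOKEN} {joined_keyphrases}",
        f"{ARTICLE_TOKEN} {article.strip()}",
    ]
    return " ".join(parts)


def build_regular_input(article: str) -> str:
    """Baseline 'regular' input: the article body alone."""
    return article.strip()
```

For example, `build_informative_input("Judul", ["a", "b"], "isi berita")` returns `"<TITLE> Judul <KEYPHRASES> a, b <ARTICLE> isi berita"`. When such strings are fed to a pre-trained tokenizer, the markers would typically have to be registered as additional special tokens so they are not split into subwords; whether the authors did this is not stated in the abstract.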

License

This work is licensed under a Creative Commons Attribution-ShareAlike 4.0 International License.

Copyright of this journal is assigned to Jurnal Buana Informatika as the journal publisher with the knowledge of the author, while the moral rights of the publication belong to the author. All printed and electronic publications are open access for educational, research, and library purposes. The editorial board is not responsible for copyright violations arising from uses other than the purposes mentioned above. Reproduction of any part of this journal (printed or online) is allowed only with written permission from Jurnal Buana Informatika.
